Projects

Visual Storytelling Research #1

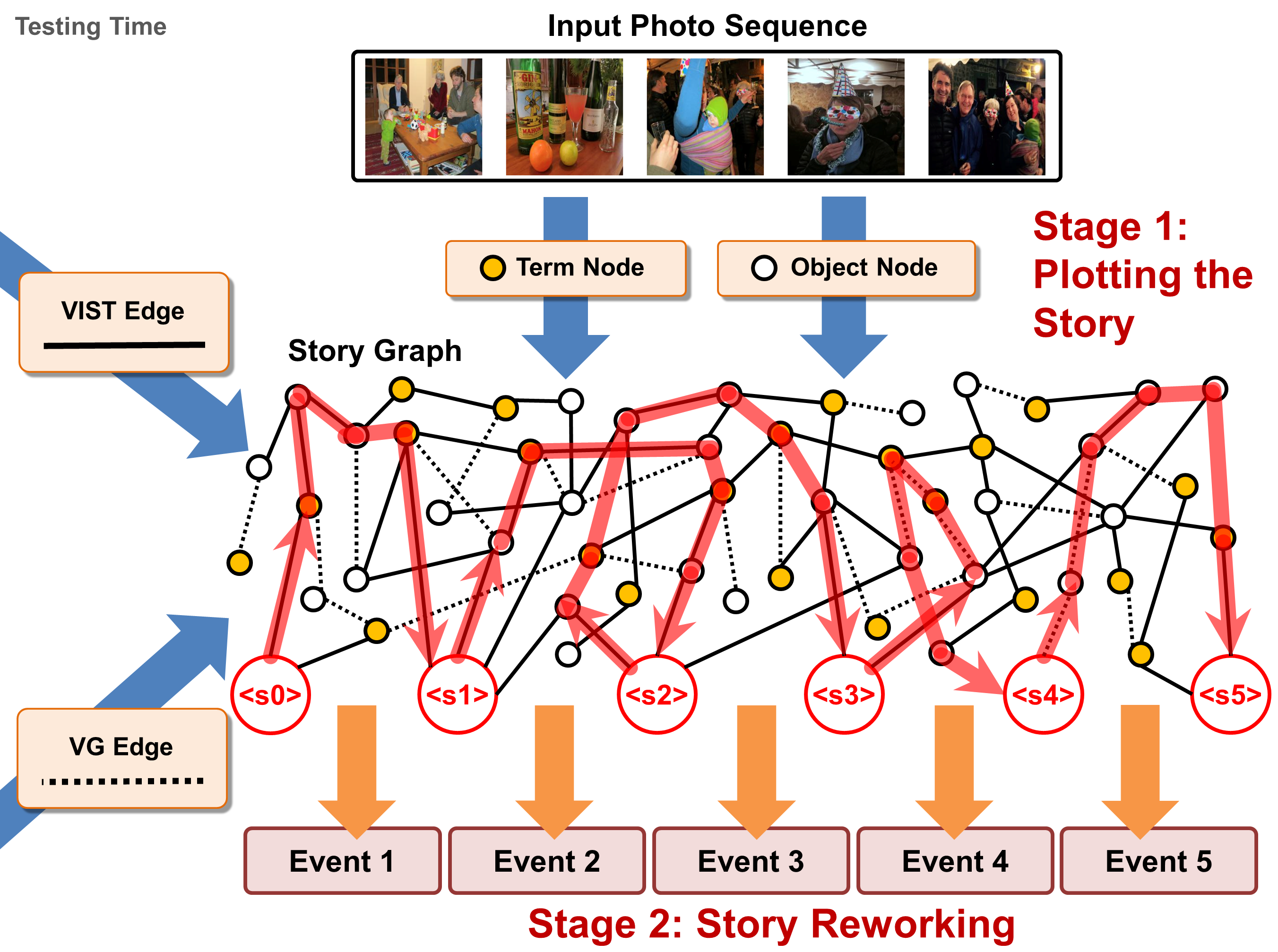

Writing a coherent and engaging story is not easy. Creative writers use their knowledge and worldview to put disjointed elements together to form a coherent storyline, and work and rework iteratively toward perfection. We introduce PR-VIST, a framework that represents the input image sequence as a story graph in which it finds the best path to form a storyline. PR-VIST then takes this path and learns to generate the final story via a re-evaluating training process.

[PAPER]

Visual Storytelling Demo Research

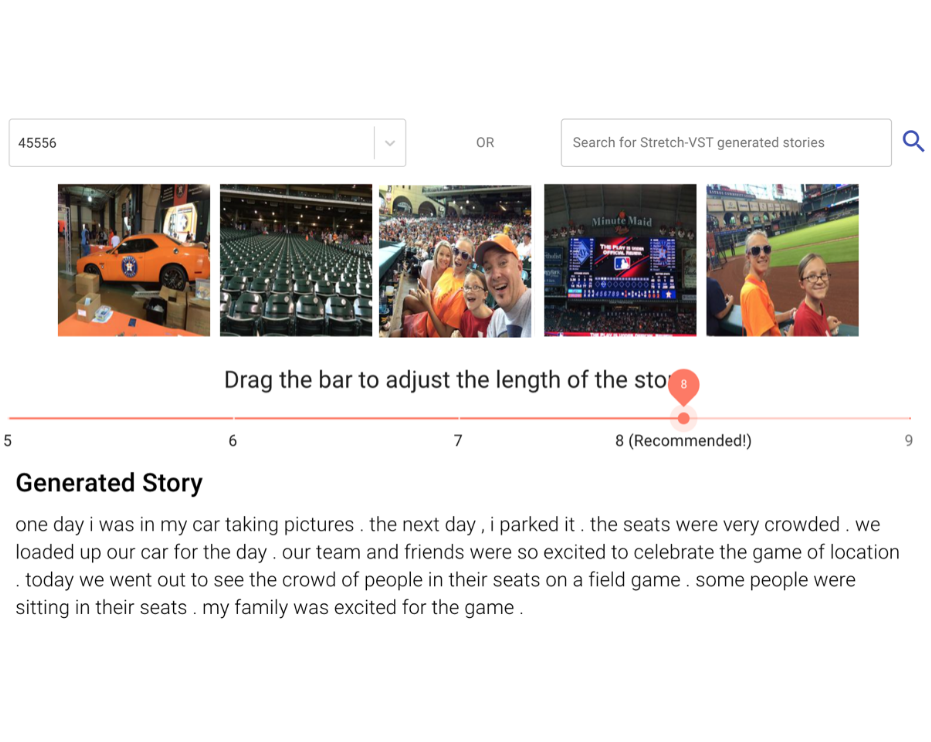

Distilled nouns and verbs from a sequence of images and used a knowledge graph to find the important relations between the nouns. Dynamically applied a recurrent Transformer to generate stories of varying length. Human evaluation showed that our model can generate longer stories, even when the input images are incoherent.

[DEMO] [VIDEO]

COVID-19 X Essential Workers

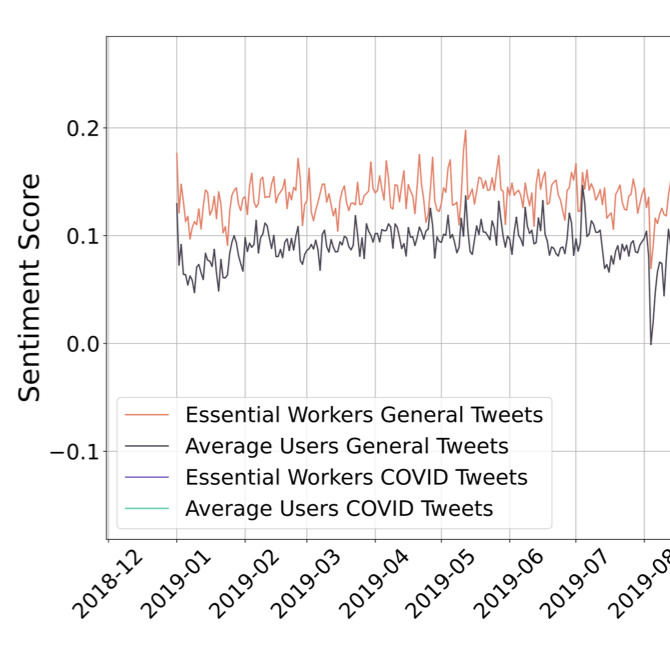

The COVID-19 pandemic has led to large-scale lifestyle changes and increased social isolation and stress on a societal level. This has had a unique impact on US “essential workers” (EWs). We examine the use of Twitter by EWs as a step toward understanding the pandemic’s impact on their mental well-being, as compared to the population as a whole.

[PAPER]

Visual Question Generation

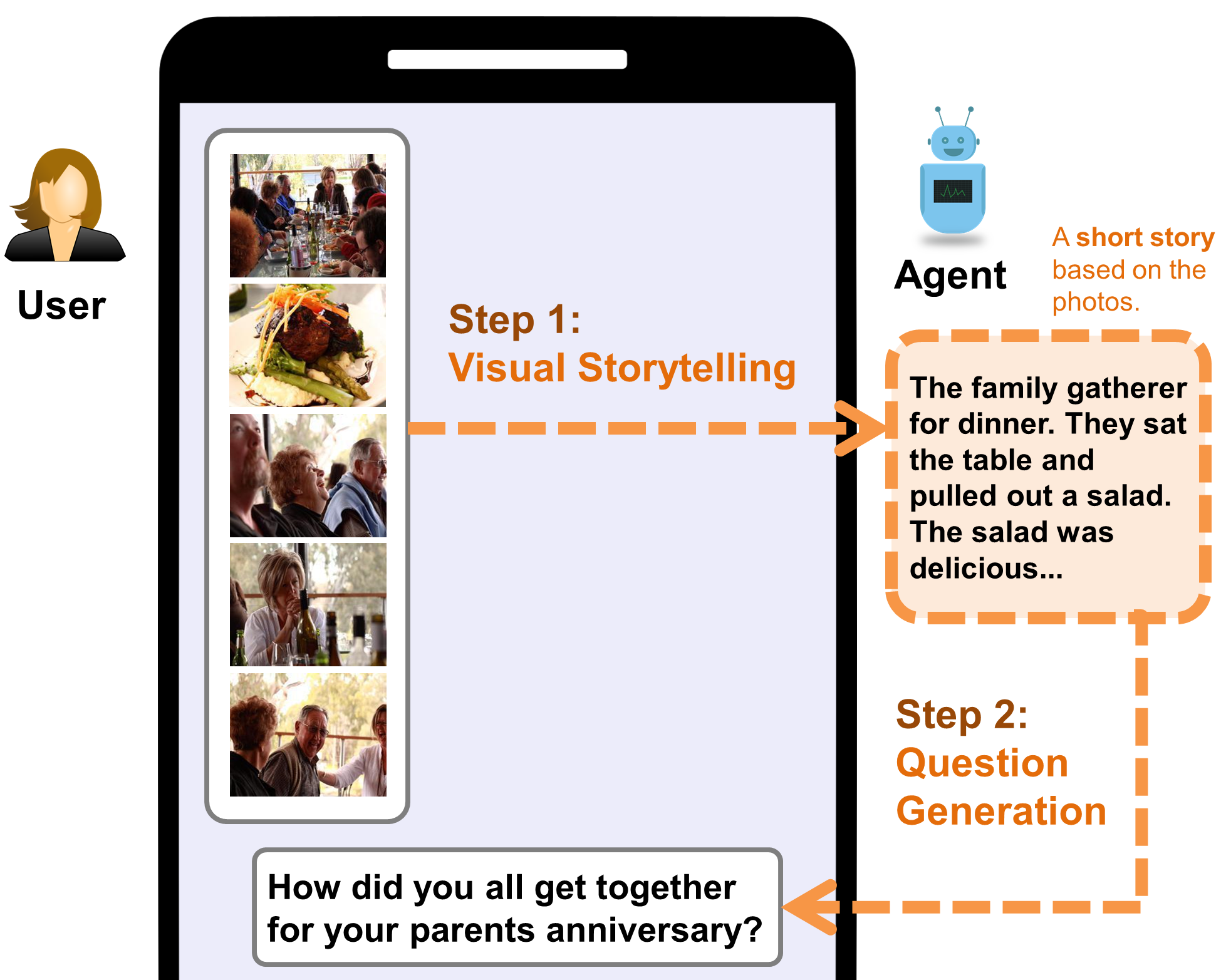

An engaging and provocative question can open up a great conversation. In this work, we explore a novel scenario: a conversation agent views a set of the user’s photos (for example, from social media platforms) and asks an engaging question to initiate a conversation with the user.

[PAPER]

Visual Storytelling Research #2

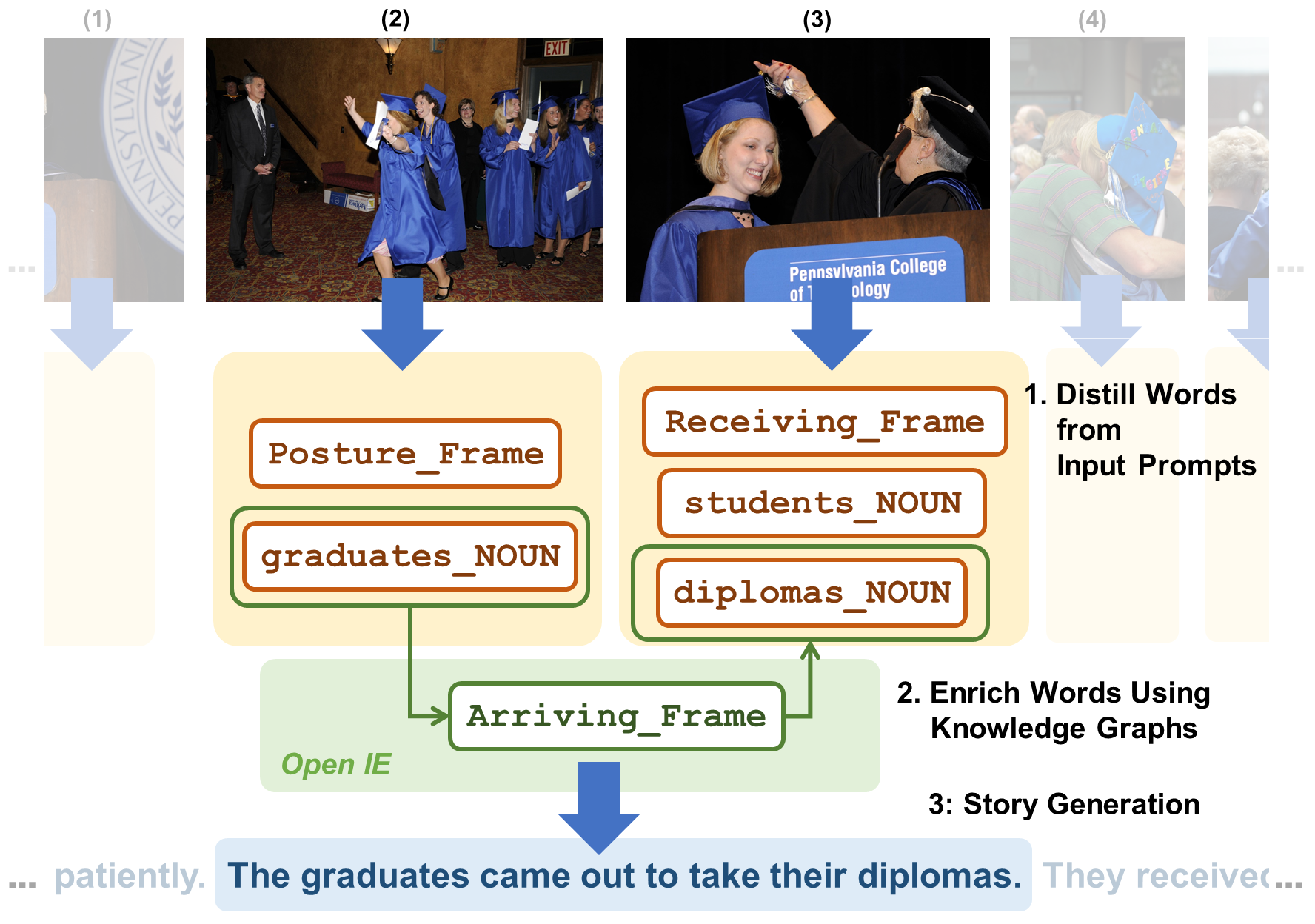

KG-Story is a three-stage framework that allows the story generation model to take advantage of external knowledge graphs to produce interesting stories. KG-Story distills a set of representative words from the input prompts, enriches the word set by using external knowledge graphs, and finally generates stories based on the enriched word set.

[PAPER]

Emoji Prediction

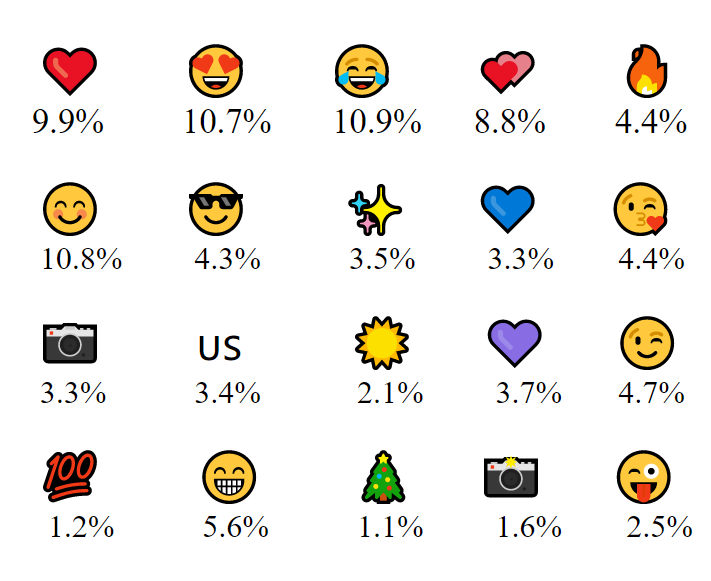

Hashtags and application sources (such as Android) are two features that we found to be important yet underused in emoji prediction and in Twitter sentiment analysis as a whole. We show the importance of using Twitter features to help the model understand the sentiment involved and hence predict the most suitable emoji for the text. To further understand emoji behavioral patterns, we propose a more balanced dataset built by crawling additional Twitter data, with timestamp, hashtags, and application source serving as additional attributes of each tweet.

[Course Project]

Predicting Crop Price Trends

Farmer suicides have become an urgent social problem that governments around the world are trying hard to solve. Most farmers are driven to suicide by an inability to sell their produce at desired profit levels, which is caused by widespread uncertainty and fluctuation in produce prices resulting from varying market conditions. To help farmers with produce price uncertainty, this paper proposes a deep learning algorithm that predicts future produce price trends (Increase, Decrease, Stable) based on past pricing and volume patterns.
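As a rough illustration of the three trend classes named above, the sketch below labels each time step of a price series by its relative change over a look-ahead window. This covers only how such targets might be defined, not the deep learning model itself. The function name `label_trend`, the `horizon` parameter, and the 2% `threshold` are assumptions made for this example and do not come from the paper.

```python
from typing import List


def label_trend(prices: List[float], horizon: int = 1, threshold: float = 0.02) -> List[str]:
    """Label each time step by the relative price change `horizon` steps ahead.

    Both `horizon` and `threshold` are hypothetical values chosen for illustration.
    """
    labels = []
    for t in range(len(prices) - horizon):
        # Relative change between the current price and the future price.
        change = (prices[t + horizon] - prices[t]) / prices[t]
        if change > threshold:
            labels.append("Increase")
        elif change < -threshold:
            labels.append("Decrease")
        else:
            labels.append("Stable")
    return labels


# Toy price series (made-up numbers, not real market data).
prices = [100.0, 103.0, 103.5, 99.0, 99.5]
print(label_trend(prices))
```

A predictor trained on past price and volume windows would then treat these labels as its classification targets. Where to place the threshold is a design choice that trades off sensitivity against noise.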

[PAPER]

Automatic Caption Generation for Twitter Disaster Scene

Twitter is a mainstream social media platform for users to share information. In particular, during a disaster, a large volume of tweets with various kinds of content is posted on Twitter. Fortunately, Twitter provides an API that allows users to crawl images and captions from its database. Based on this data, we propose an image captioning model to generate textual descriptions for disaster-related images.

[Course Project]
